This file needs to be placed in the root directory of your website – the highest-level directory that contains all other files and directories on your website.

The first step is to create a file named robots.txt. Now that we’ve gone over why robots.txt files are important in SEO, let’s discuss some best practices recommended by Google. What Google Says About robots.txt File Best Practices If you have an eCommerce website with a lot of product pages, you might want to only allow Google to index your main category pages.Ĭonfiguring your robots.txt file correctly can help you control the way Googlebot crawls and indexes your website, which can eventually help improve your ranking. If you have a blog with hundreds of posts, you might want to only allow Google to index your most recent articles. If you have a website with a lot of pages, you might want to block certain pages from being indexed so they don’t overwhelm search engine web crawlers and hurt your rankings. The crawl budget is the number of pages Google will crawl on your website during each visit.Īnother reason why robots.txt files are important in SEO is that they give you more control over the way Googlebot crawls and indexes your website. If your website has a lot of pages that are blocked by the robots.txt file, it can also lead to a wasted crawl budget. And too many errors on your website can lead to a slower crawl rate which can eventually hurt your ranking due to decreased crawling.

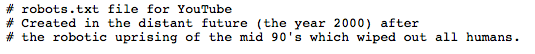

If a web crawler tries to crawl a page that is blocked in the robots.txt file, it will be considered a soft 404 error.Īlthough a soft 404 error will not hurt your website’s ranking, it is still considered an error. Robots.txt file is a file located on your server that tells web crawlers which pages they can and cannot access. What Is a robots.txt File & Why Is It Important in SEO? In this blog post, we’ll be discussing everything you need to know about robots.txt, from what is a robots.txt file in SEO to the best practices to the proper way to fix common issues. This might not seem like a big deal, but if your robots.txt file is not configured correctly, it can have a serious negative effect on your website’s SEO. The robots.txt file is a code that tells web crawlers which pages on your website they can and cannot crawl. But did you know that one of the most important files for your website’s SEO is also found on your server? Each SEO tactic plays into the grand scheme of boosting your page rank by ensuring web crawlers can easily crawl, rank, and index your website.įrom page loading time to proper title tags, there are many ranking signals that technical SEO can help with. Would apply to a URL like /content/biology/./foobar, but not to a URL like /content/biology/foobar nor /content/biology/.Technical SEO is a well-executed strategy that factors in various on-page and off-page ranking signals to help your website rank higher in SERPs. must appear in the URLs you want to noindex. If Noindex works like Disallow (which we don’t know for sure, as Noindex is not specified/documented, but I guess it wouldn’t make sense to specify it differently), the. Of course a bot may try to "fix" this and interpret the following lines to be part of this record (i.e., ignoring the blank lines), but the robots.txt spec doesn’t define this, so I wouldn’t count on it. So none of the following Disallow/ Allow/ Noindex lines apply. 
Empty lines are used to separate records.Ī conforming bot (which doesn’t identify as Googlebot-Image/ Adsbot-Google/ Mediapartners-Google) uses this record: User-agent: * Empty linesĪ record must not contain empty lines. Google supported it as experimental feature (see: How does “Noindex:” in robots.txt work?), but it’s not clear if that is still the case (as they didn’t document it to begin with). Note that Noindex is not part of the original robots.txt specification.
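The point about empty lines separating records can be checked with a small experiment. The following is a minimal sketch using Python's standard-library `urllib.robotparser`, which is only one parser among many; other crawlers, including Googlebot, may treat a stray empty line differently. The rule sets, the `ExampleBot` user agent, the `example.com` URLs, and the `can_fetch` helper are all hypothetical and chosen purely for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: two records, separated by exactly one empty line.
WELL_FORMED = """\
User-agent: Googlebot-Image
Disallow: /

User-agent: *
Disallow: /private/
"""

# The same catch-all record, but with an empty line inside it.
# The empty line ends the record before any rule has been attached.
BROKEN_RECORD = """\
User-agent: *

Disallow: /private/
"""


def can_fetch(robots_txt: str, user_agent: str, url: str) -> bool:
    """Parse robots.txt text and report whether user_agent may crawl url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    page = "https://example.com/private/report.html"
    for label, rules in (("well-formed", WELL_FORMED), ("broken", BROKEN_RECORD)):
        # Print whether the hypothetical ExampleBot may crawl the page.
        print(label, can_fetch(rules, "ExampleBot", page))
```

With this parser, the well-formed rules should block `ExampleBot` from `/private/`, while the broken record should leave the same URL crawlable, because the `Disallow` line after the empty line no longer belongs to any record. Running a check like this before deploying a robots.txt file is one way to catch accidental blank lines.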
